The Data Science Behind the Headline: Unpicking the impact of COVID-19 from the UK’s largest datasets

24 August 2022

We speak to Chris Tomlinson and Johan Thygesen about their latest paper, what it’s like to handle data from over 57 million people, and what they think the future of health data research will look like.

Introduce yourselves

Johan (J): I’m Johan, lecturer at the Institute of Health Informatics. My research focuses on electronic health records and genetics.

Chris (C): And I’m Chris, an anaesthetics registrar and first-year PhD student in AI-enabled healthcare at the UCL Institute of Health Informatics.

Tell us about your latest paper, which looked at how over 57 million people in England progressed through the healthcare system during the COVID-19 pandemic

J: In this paper, we combined all the available data sources on COVID-19, covering over 57 million people in England, to map out the events an individual might experience during the pandemic: for example, an early diagnosis from a PCR test, perhaps a hospital admission with some form of ventilatory support and, in some cases, death.

We used this to understand how infections and individual people’s need for healthcare might have changed between the first two waves of the pandemic, and also how they differed across demographic groups and ethnicities.

Why is it useful to look at diseases like COVID-19 in this way?

C: When you look at just one dataset, you only capture a snapshot of an individual’s journey with a disease. But because we united lots of different datasets, such as those from GP surgeries and hospitals, and on testing, vaccination and mortality, we could follow how an individual moves between these different datasets – or, in reality, how they progress through different stages of the illness.

Using this approach means we’ve been able to identify people who might have fallen through the cracks of other analyses. For example, 10,884 COVID-19 cases were identified from death records alone. These people didn’t have any other COVID-19-related healthcare event registered, like a positive test or hospitalisation, before dying from COVID-19. They represent a significant group of individuals whom we wouldn’t have been able to analyse without this approach of uniting multiple datasets.
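To illustrate the idea of linking records across sources and spotting people who appear only in death records, here is a minimal Python sketch. It is not the authors’ pipeline: the record layout, the source labels and the function names (`link_by_person`, `death_only_cases`) are hypothetical, and the rows are toy data rather than real results.

```python
from collections import defaultdict

# Hypothetical toy records: (pseudonymised person ID, source dataset, event).
# Illustrative only; real linked health records are far richer than this.
RECORDS = [
    ("p1", "testing", "positive PCR test"),
    ("p1", "hospital", "admission"),
    ("p1", "deaths", "COVID-19 on death certificate"),
    ("p2", "testing", "positive PCR test"),
    ("p3", "deaths", "COVID-19 on death certificate"),
    ("p4", "gp", "COVID-19 diagnosis code"),
]


def link_by_person(records):
    """Group COVID-19 events from every source under a shared person ID."""
    journeys = defaultdict(list)
    for person_id, source, event in records:
        journeys[person_id].append((source, event))
    return dict(journeys)


def death_only_cases(journeys):
    """Return sorted IDs whose only COVID-19 events come from death records."""
    return sorted(
        person_id
        for person_id, events in journeys.items()
        if {source for source, _ in events} == {"deaths"}
    )


if __name__ == "__main__":
    journeys = link_by_person(RECORDS)
    print(death_only_cases(journeys))  # ['p3']
```

Looking at any single source here, `p3` would be either invisible (testing, hospital or GP data) or have no earlier history (death data alone); only once the sources are linked by person does it become clear that this is a case with no prior COVID-19 healthcare event.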

What was the hardest part of the project?

J: There was a lot of data to handle, so we spent several months at the start of the project making sure we combined all the datasets in a meaningful way and that we were getting the information we needed out of them.

To do this, we defined ten COVID-19 phenotypes, which are essentially computable definitions of disease, reflecting different stages of disease severity. These could then be applied to the datasets to unpick how different groups of people flowed through the healthcare system, and whether there were any patterns related to ethnicity, for example.

C: Some of the more traditional research methods just don’t work on data this big. So developing the phenotypes was really technical at times, and was a learning curve for all of us.

Luckily, we had the support of the CVD-COVID-UK/COVID-IMPACT Consortium, through our funders the BHF Data Science Centre, which meant we were able to tackle the challenge as a community of researchers, alongside NHS Digital’s excellent team of data wranglers.

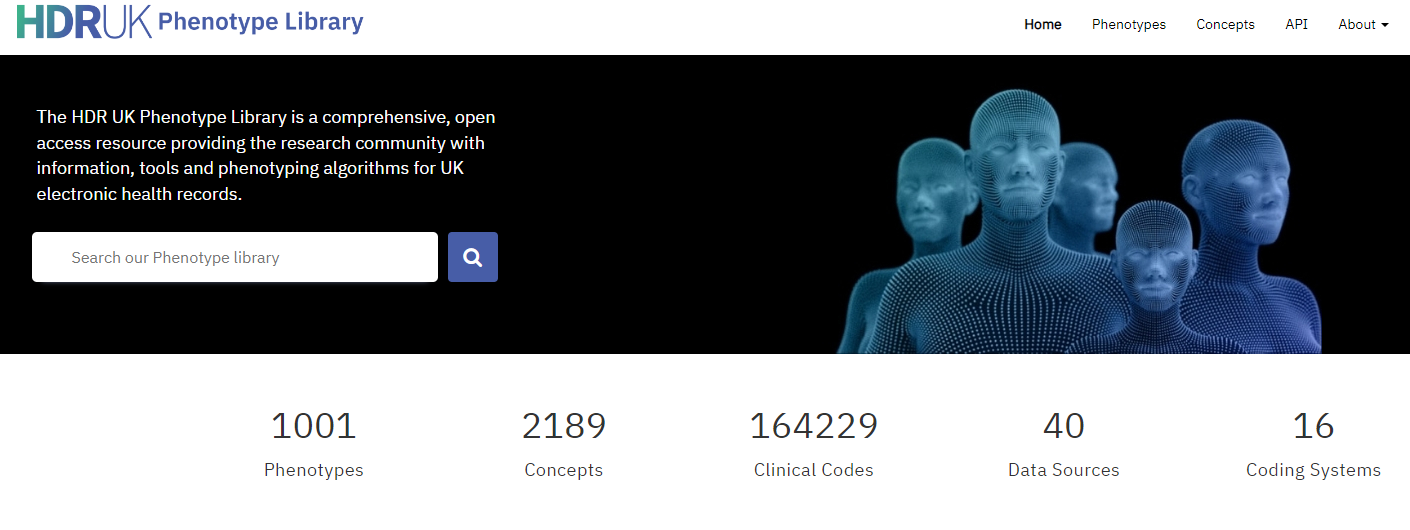

We’ve now made our phenotypes available to any researcher via the HDR UK Phenotype Library, so others can make use of them in future work. I’m proud to say our phenotypes have already enabled other researchers to produce impactful analyses assessing the association of COVID-19 vaccines with major venous, arterial and thrombocytopenic events, the effect of antithrombotic medication on COVID-19 outcomes, and the incidence of vascular diseases after COVID-19 infection.

What’s one thing that surprised you about your results?

J: To some extent, it was the sheer number of people who died during this pandemic that really shocked me. It’s easy to tune out the numbers when you hear them on the news, but when you sit and produce these tables yourself, it really brings it home.

What’s the biggest misconception about what you do as a data scientist?

J: Maybe that we spend all our time producing nice graphs. Whereas in reality, the vast majority of our work is on the back end – getting all the data together, doing the quality controls, finding errors, and correcting things. It takes a long time before you can actually start producing the graphs.

Anything that wouldn’t surprise us at all about what you do?

C: There’s a lot of staring at screens!

What do you think will be different about your area of work in 10 years?

C: It feels like we’re at a bit of a turning point with access to health data in the UK. A lot of progress was made during COVID-19 to improve how data can be securely accessed by researchers.

But there have also been cases where how people’s data is being used hasn’t been communicated well to the public. If we lose the public’s trust, that may jeopardise a lot of the progress that’s been made in enabling health data research.

I hope that in 10 years’ time, the research community will have been able to collectively demonstrate the value of using patient data at scale, and that this will have expanded to include much more of the data that’s routinely collected but not yet brought together at scale. For example, patient blood test results would have been really valuable for this sort of COVID-19 work, but as it stands they are not routinely made available.

I hope our work can help form a small part of this.

Will anything be the same in 10 years?

J: There’s always a temptation to develop tools that make our work easier, so that you could, say, do a ‘plug and play’ analysis on big datasets. But I think there will always be a need to do the manual scrutiny, and really take the time to understand the data and check it’s fit for purpose.

I think that need for scrutiny will always remain, which I see as a positive thing. Even though it can be messy and frustrating, it’s a big responsibility.